Why Training AI Cars to Stop for School Buses Failed

Carmen López


A school district's attempt to help train autonomous vehicles to recognize school buses revealed the complex gap between AI training and real-world human environments.

So here's something that happened recently that really makes you think about where we're headed with autonomous vehicle technology. A school district tried to help train Waymo's self-driving cars to recognize and stop for school buses, and, well, it didn't work out the way anyone hoped.

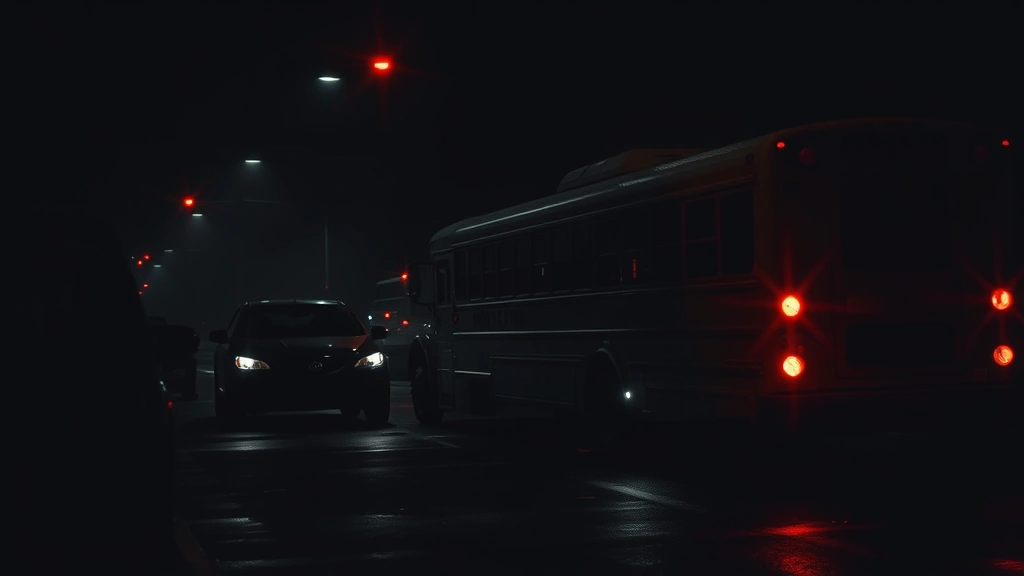

You'd think this would be straightforward, right? School buses are big, yellow, and have those flashing red lights. They're designed to be impossible to miss. But when it comes to teaching artificial intelligence systems to understand these real-world scenarios, things get complicated fast.

### The Real-World Challenge of AI Training

Here's the thing about training AI for autonomous vehicles: it's not just about programming rules. It's about teaching machines to interpret complex, unpredictable human environments. School zones present some of the most challenging scenarios: kids darting into streets, irregular traffic patterns, and big yellow buses making frequent stops.

The school district thought they could help by providing data and scenarios. They wanted to make their streets safer. But the gap between human understanding and machine learning turned out to be wider than anyone anticipated.

### Why Simple Solutions Don't Work

You might wonder: can't they just program the cars to stop for anything yellow and flashing? It's not that simple. Autonomous vehicles need to:

- Distinguish between school buses and other large yellow vehicles

- Understand when a bus is actively loading or unloading children

- Recognize the difference between a bus with lights flashing and one without

- Decide when to wait behind a stopped bus and when it's legal and safe to pass without disrupting traffic

Each of these takes large amounts of training data and real-world testing to get right. And even then, edge cases pop up that the system hasn't encountered before.
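To make the list above concrete, here is a deliberately simplified Python sketch contrasting the naive "stop for anything yellow and flashing" rule with a slightly more context-aware one. Everything in it is hypothetical: the `Detection` record, its fields, and both functions are invented for illustration and are not how Waymo or any other company actually structures its driving software.

```python
from dataclasses import dataclass


# Hypothetical, simplified summary of what a perception system reports
# about one detected vehicle. Not a real Waymo (or any vendor) data type.
@dataclass
class Detection:
    object_class: str        # e.g. "school_bus", "construction_vehicle"
    color: str               # dominant color as perceived, e.g. "yellow"
    lights_flashing: bool    # are any warning lights flashing?
    light_color: str         # "red", "amber", or "none"
    stop_arm_extended: bool  # is the bus's stop arm out?


def naive_should_stop(d: Detection) -> bool:
    # The "anything yellow and flashing" rule: it ignores what the object is.
    return d.color == "yellow" and d.lights_flashing


def bus_rule_should_stop(d: Detection) -> bool:
    # Only a school bus triggers this rule, and only when its red lights
    # are flashing or its stop arm is extended.
    if d.object_class != "school_bus":
        return False
    return (d.lights_flashing and d.light_color == "red") or d.stop_arm_extended


# Hypothetical scenarios, one per case the list describes.
cases = {
    "yellow construction truck, amber lights": Detection(
        "construction_vehicle", "yellow", True, "amber", False),
    "parked school bus, lights off": Detection(
        "school_bus", "yellow", False, "none", False),
    "school bus loading children": Detection(
        "school_bus", "yellow", True, "red", True),
    "school bus, color misread in glare": Detection(
        "school_bus", "white", True, "red", True),
}

for name, d in cases.items():
    print(f"{name}: naive={naive_should_stop(d)}, bus_rule={bus_rule_should_stop(d)}")
```

The naive rule stops for the construction truck and misses the bus whose color was misread; the second rule handles both. But even the better rule is only as good as its inputs. Deciding that an object is a school bus, that its lights are red and flashing, and that the stop arm is out is exactly the perception problem the training data has to solve. Writing the rule is the easy part.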

### The Human Element in Machine Learning

What's really interesting here is how this situation highlights the difference between human intuition and algorithmic decision-making. We humans see a school bus and immediately understand the context—we know children might be present, we recognize the potential danger, and we adjust our behavior accordingly.

For AI systems, that context has to be learned from examples or spelled out by engineers. There's no intuition, no gut feeling, no ability to read subtle social cues. The system can only generalize from what it's been trained on, and school bus scenarios involve so many variables that comprehensive training becomes incredibly difficult.

As one expert put it: "We're asking machines to understand human environments that even humans sometimes struggle with."

### What This Means for the Future of Autonomous Vehicles

This isn't just about school buses. It's about all the unexpected situations autonomous vehicles will encounter. Think about:

- Construction zones with temporary signs

- Emergency vehicles approaching from different directions

- Pedestrians using crosswalks versus jaywalking

- Animals crossing roads

- Weather conditions affecting visibility

Each of these requires specialized training, and we're discovering that our roads are far more complex than we realized when we started this autonomous vehicle journey.

### The Path Forward for AI and Public Safety

So where do we go from here? This experience suggests we need:

- More collaboration between tech companies and local communities

- Better simulation environments that can recreate rare but critical scenarios

- Ongoing testing in diverse real-world conditions

- Transparent communication about system limitations

It's going to take time. The promise of autonomous vehicles is incredible: fewer crashes, more mobility for people who can't drive, more efficient transportation systems. But we're learning that getting there requires solving thousands of small problems, not just a few big ones.

The school bus situation is a reminder that technology doesn't exist in a vacuum. It operates in our messy, unpredictable human world. And bridging the gap between what an algorithm has learned and the reality it has to drive through? That's the real challenge ahead.

What's encouraging is that people are trying. School districts are engaging with tech companies. Communities are providing feedback. We're all learning together how to make this technology work safely in our neighborhoods.

It might take longer than we expected, but that's okay. When it comes to safety, especially children's safety, taking the time to get it right is what matters most.